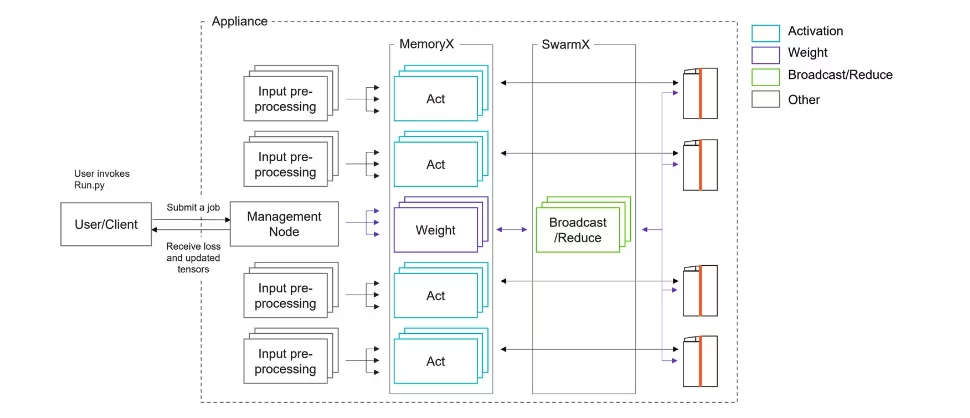

Cerebras markets the CS-3 as a wafer-scale accelerator (the WSE-3) plus the system that surrounds it. Cerebras organizes its systems as shown in the diagram above.[1]

From left to right in the above diagram:

- User/client nodes are the external workstations or login nodes to which users SSH.

- Management nodes are CPU nodes for resource allocation.

- Input pre-processing nodes are CPU nodes for general-purpose computational tasks such as input pre-processing. I think these are also the nodes that mount external storage.

- MemoryX are x86 + DRAM + optional flash appliances for storing model weights. They are packaged into 12-node enclosures.

- SwarmX are x86 appliances for moving data to/from individual accelerators. They are packaged into 12-node enclosures.

- WSE (unlabeled) is the wafer-scale accelerator and its giant liquid cooling infrastructure.

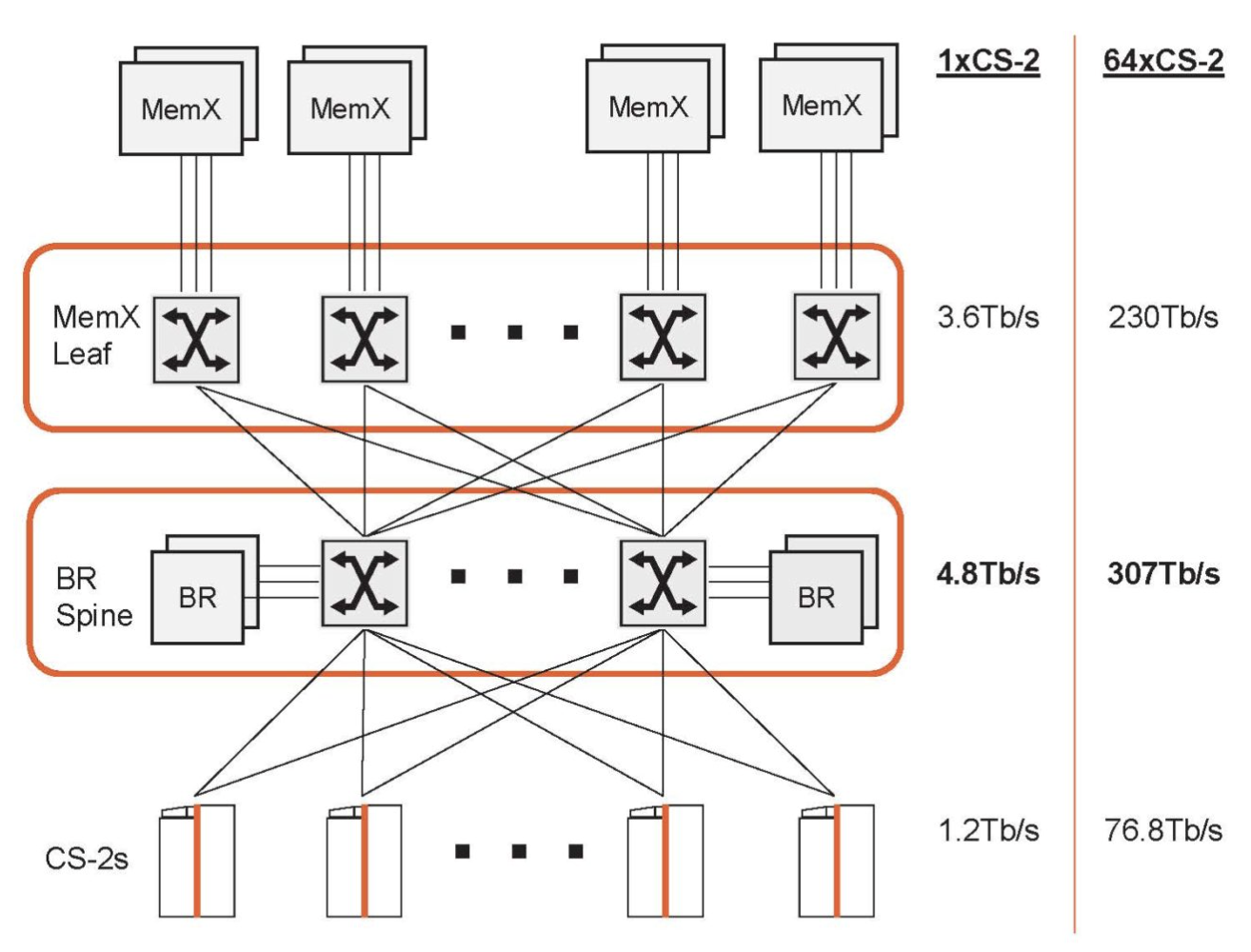

These components are networked as shown above,[2]

where

- “MemX” is a MemoryX node/enclosure

- “MemX Leaf” is a MemoryX switch

- “BR Spine” contains SwarmX nodes/enclosures and SwarmX switches

- “CS-2s” are the wafer-scale accelerators which are directly attached to the SwarmX switches via 100 GbE.

WSE-3

A WSE-3 wafer-scale accelerator has:[3]

- around 900,000 cores in total[4]

- 84 dies, each with about 10,700 cores

- 44 GB of SRAM evenly spread across cores

- 21 PB/s of memory bandwidth between cores and SRAM

- 1.2 Tb/s (150 GB/s) external connectivity

- 12x100 GbE (4x QSFP-DD 4x100G)

- Wafer is directly fabric-attached

Each WSE-3 core has:

- 48 KiB of SRAM

- 512 B of cache

- 16 general-purpose registers

- 48 data structure registers

- 8-way FP16/BF16 SIMD

- 16-way INT8 SIMD
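The wafer-level and per-core figures above can be cross-checked with a few multiplications. The short Python sketch below does that, assuming decimal gigabytes (10^9 bytes) for the 44 GB SRAM figure and 8 bits per byte for the link conversion; the helper names are illustrative only, not anything Cerebras publishes.

```python
# Cross-check the WSE-3 figures quoted above against each other.
# All inputs come from the spec lists; nothing here is measured.
KIB = 1024

cores_total = 900_000            # "around 900,000 cores total"
dies = 84                        # "84 dies"
cores_per_die = 10_700           # "about 10,700 cores" per die
sram_per_core_bytes = 48 * KIB   # "48 KiB of SRAM" per core
link_count, link_gbps = 12, 100  # "12x100 GbE"


def wafer_sram_gb(cores: int, per_core_bytes: int) -> float:
    """Total on-wafer SRAM in decimal gigabytes (1 GB = 10**9 bytes)."""
    return cores * per_core_bytes / 1e9


def link_bandwidth(count: int, gbps_each: int) -> tuple[float, float]:
    """Aggregate link rate as (Tb/s, GB/s), using 8 bits per byte."""
    total_gbps = count * gbps_each
    return total_gbps / 1000, total_gbps / 8


print(f"cores from dies: {dies * cores_per_die:,}")
print(f"wafer SRAM: {wafer_sram_gb(cores_total, sram_per_core_bytes):.1f} GB")
tbps, gbytes = link_bandwidth(link_count, link_gbps)
print(f"external links: {tbps:.1f} Tb/s = {gbytes:.0f} GB/s")
```

With these inputs, 84 dies of about 10,700 cores give 898,800 cores, 900,000 cores at 48 KiB each come to about 44.2 GB, and 12x100 GbE works out to 1.2 Tb/s or 150 GB/s, all consistent with the list.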

A single system can scale up to 2,048 WSE-3 accelerators.[5]

As best I can tell, WSE-3 gets most of its performance gain over WSE-2 by doubling the SIMD width per core. SRAM capacity increased by about 10%, and core count by about 6%.
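To make that comparison concrete, the sketch below computes the percentage changes and a crude per-clock throughput proxy (cores times FP16 SIMD width). The WSE-2 inputs, 850,000 cores and 40 GB of SRAM, are Cerebras' published WSE-2 figures rather than numbers from this page, and the WSE-2 SIMD width of 4 is only inferred from the "doubling" claim. Clock speed and any other microarchitectural changes are ignored.

```python
# Rough WSE-2 -> WSE-3 comparison behind the paragraph above.
# WSE-3 inputs come from the spec list. WSE-2 cores/SRAM are Cerebras' published
# WSE-2 figures; the WSE-2 FP16 SIMD width of 4 is ASSUMED from "doubling".
from dataclasses import dataclass


@dataclass(frozen=True)
class Wafer:
    name: str
    cores: int
    sram_gb: float
    fp16_simd_width: int

    def fp16_lanes(self) -> int:
        """FP16 SIMD lanes across the wafer: a per-clock proxy that ignores clock speed."""
        return self.cores * self.fp16_simd_width


def pct_increase(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new / old - 1) * 100


wse2 = Wafer("WSE-2", 850_000, 40.0, 4)
wse3 = Wafer("WSE-3", 900_000, 44.0, 8)

print(f"cores: +{pct_increase(wse2.cores, wse3.cores):.1f}%")
print(f"SRAM:  +{pct_increase(wse2.sram_gb, wse3.sram_gb):.1f}%")
print(f"FP16 lanes: {wse3.fp16_lanes() / wse2.fp16_lanes():.2f}x")
```

With these inputs the lane proxy comes out to about 2.1x, and nearly all of that comes from the SIMD width rather than the roughly 6% increase in core count.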

MemoryX

The CS-2 MemoryX node had:[6]

| Resource | Capacity per node | Capacity per enclosure (12 nodes) |
|---|---|---|
| Nodes | 1 | 12 |
| DDR DRAM | 128 - 1024 GB | 1.5 - 12 TB |
| Flash | 0 - 500 TB | 0 - 6.0 PB |
| CPU cores | 32 | 384 |
| NICs | 2x100 GbE (RoCE) | 24x100 GbE (2.4 Tb/s, 300 GB/s) |
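The per-enclosure column follows from multiplying each per-node value by 12. A minimal Python sketch of that arithmetic, with the per-node inputs copied from the table (the dictionary keys are illustrative names, not Cerebras terminology):

```python
# Scale the CS-2 MemoryX per-node figures from the table up to one
# 12-node enclosure. Inputs are copied from the table; nothing is measured.
NODES_PER_ENCLOSURE = 12
LINK_GBPS = 100  # each NIC port is 100 GbE

# resource -> (min, max) per node
per_node = {
    "dram_gb": (128, 1024),
    "flash_tb": (0, 500),
    "cpu_cores": (32, 32),
    "nic_ports": (2, 2),
}


def per_enclosure(nodes: int = NODES_PER_ENCLOSURE) -> dict[str, tuple[int, int]]:
    """Multiply each (min, max) per-node range by the number of nodes."""
    return {name: (lo * nodes, hi * nodes) for name, (lo, hi) in per_node.items()}


enc = per_enclosure()
ports = enc["nic_ports"][1]
print(f"DRAM:  {enc['dram_gb'][0]:,} - {enc['dram_gb'][1]:,} GB")
print(f"flash: up to {enc['flash_tb'][1] / 1000:.1f} PB")
print(f"cores: {enc['cpu_cores'][1]}")
print(f"NICs:  {ports}x100 GbE = {ports * LINK_GBPS / 1000:.1f} Tb/s "
      f"= {ports * LINK_GBPS / 8:.0f} GB/s")
```

The 1,536 GB and 12,288 GB results are the 1.5 TB and 12 TB in the table when 1 TB is taken as 1,024 GB; the enclosure totals are 384 CPU cores and 24 ports, or 2.4 Tb/s (300 GB/s).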

CS-3 offers several MemoryX SKUs:[7][6]

| SKU type | Capacity (DRAM + flash) |
|---|---|
| Enterprise | 1.5 TB |
| Enterprise | 12 TB |
| Enterprise | 24 TB |
| Enterprise | 36 TB |
| Hyperscale | 120 TB |
| Hyperscale | 1,200 TB |

Cerebras does not disclose how these capacities split between DRAM and flash.
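One way to see why that split matters is to ask how many CS-2-era MemoryX enclosures each CS-3 SKU would need at the two extremes. This is a hypothetical sketch: it assumes CS-3 MemoryX nodes have the same per-node limits as the CS-2 table above, which Cerebras has not stated, and `enclosures_needed` is an illustrative helper.

```python
# HYPOTHETICAL sizing: how many CS-2-era MemoryX enclosures (12 nodes each)
# would each CS-3 SKU need under two extreme assumptions? Per-enclosure limits
# come from the CS-2 table; applying them to CS-3 is an assumption.
import math

MAX_DRAM_TB_PER_ENCLOSURE = 12      # 12 nodes x 1024 GB
MAX_FLASH_TB_PER_ENCLOSURE = 6_000  # 12 nodes x 500 TB

sku_capacity_tb = [1.5, 12, 24, 36, 120, 1_200]


def enclosures_needed(capacity_tb: float, per_enclosure_tb: float) -> int:
    """Smallest whole number of enclosures whose combined limit covers the capacity."""
    return math.ceil(capacity_tb / per_enclosure_tb)


for cap in sku_capacity_tb:
    dram_only = enclosures_needed(cap, MAX_DRAM_TB_PER_ENCLOSURE)
    with_flash = enclosures_needed(
        cap, MAX_DRAM_TB_PER_ENCLOSURE + MAX_FLASH_TB_PER_ENCLOSURE
    )
    print(f"{cap:>7,} TB: {dram_only:>3} enclosure(s) if all DRAM, "
          f"{with_flash} if flash is allowed")
```

Under a DRAM-only reading the Enterprise SKUs land on whole enclosures (1, 1, 2, and 3), while allowing flash lets every SKU fit in a single enclosure, so capacity alone does not settle the question.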

Storage

Cerebras says little about integrating external storage into CS-3 systems, but DDN publishes its own reference architecture[8] that connects storage to the input pre-processing nodes’ 100 GbE network. Based on their rack diagram:

- each 2U storage enclosure has 8x 100G connections

- each input pre-processing node probably(?) has 2x 100G connections

System

A single Cerebras scalable unit is customizable but may consist of:[9]

- 1x Cerebras WSE accelerator

- 8x input pre-processing servers

    - each containing 1 node

    - each with 1x or 2x 100 GbE connections per node(?)

- 3x MemoryX “enclosures”

    - each containing 12 nodes

    - each node with 2x 100 GbE connections (24 per enclosure)[6]

- 1x MemoryX switch

- 1x SwarmX “enclosure”

    - containing 12 nodes[10]

    - each node with 5+1 100 GbE connections

- 2x SwarmX “switches”

- 1x storage enclosure (2x controllers + 24 NVMe drives)

- 1x management switch

- 1x console server

This implies the following aggregate network bandwidth per component:

| Component | Network | Bandwidth (Tb/s) | Bandwidth (GB/s) |
|---|---|---|---|
| WSE-2 | 12x100G | 1.2 | 150 |
| MemoryX | 72x100G | 7.2 | 900 |
| SwarmX | 60x100G | 6.0 | 750 |
| Storage | 8x100G | 0.8 | 100 |
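The bandwidth table can be regenerated from the parts list. The sketch below assumes 100 Gb/s per port and 8 bits per byte, and counts only the five data links per SwarmX node; treating the “+1” as a separate link outside this total is an assumption, as are the illustrative names.

```python
# Recompute the aggregate-bandwidth table from the scalable-unit parts list.
# Port counts follow that list. For SwarmX only 5 links per node are counted;
# treating the "+1" as a separate link is an ASSUMPTION.
LINK_GBPS = 100

# component -> (number of units, 100G ports per unit)
components = {
    "WSE-2": (1, 12),
    "MemoryX": (3 * 12, 2),  # 3 enclosures x 12 nodes, 2 ports per node
    "SwarmX": (12, 5),       # 1 enclosure x 12 nodes, 5 counted ports per node
    "Storage": (1, 8),       # 1 storage enclosure with 8x 100G
}


def aggregate(units: int, ports_per_unit: int) -> tuple[int, float, float]:
    """Return (total ports, Tb/s, GB/s), using 8 bits per byte."""
    ports = units * ports_per_unit
    gbps = ports * LINK_GBPS
    return ports, gbps / 1000, gbps / 8


print(f"{'Component':<10}{'Network':>10}{'Tb/s':>7}{'GB/s':>7}")
for name, (units, per_unit) in components.items():
    ports, tbps, gbytes = aggregate(units, per_unit)
    network = f"{ports}x100G"
    print(f"{name:<10}{network:>10}{tbps:>7.1f}{gbytes:>7.0f}")
```

Dividing the Gb/s total by 8 is what relates the two bandwidth columns, the same conversion that turns the WSE's 1.2 Tb/s into 150 GB/s.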

Patrick at ServeTheHome has shown pictures of Cerebras’ hero deployments of WSE-2 and WSE-3, which appear to show twelve general-purpose Supermicro and HPE servers per rack.[5] It’s not clear what role those servers play.

Footnotes

- The physical wafer has around 970,000 cores, but Cerebras disables some of them to improve yield. “100x Defect Tolerance: How Cerebras Solved the Yield Problem” (Cerebras) contains a little more information. The 900,000 figure is probably just how many cores they choose to enable on the shipped part.

- Cerebras WSE-3 AI Chip Launched 56x Larger than NVIDIA H100 - ServeTheHome

- Detail of the Giant Cerebras Wafer-Scale Cluster - ServeTheHome

- Cerebras CS-3: the world’s fastest and most scalable AI accelerator - Cerebras

- Detail of the Giant Cerebras Wafer-Scale Cluster - ServeTheHome